Social Robots

AI-Augmented PBL Workshops

A children's robotics workshop that taught systems thinking through emotional logic - and the scaling problem that shaped how I think about AI in education.

Overview

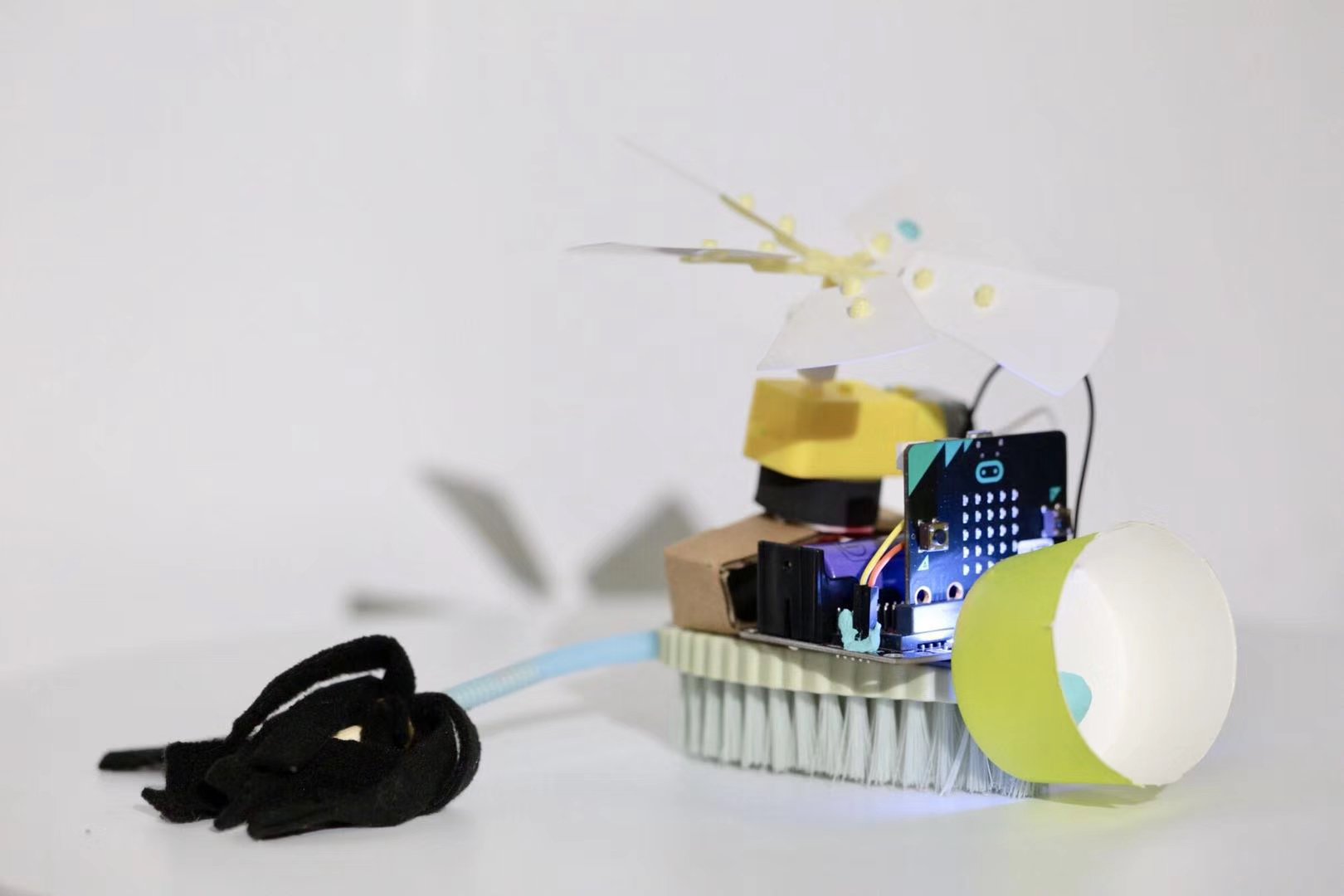

Emotional Robots was a summer workshop program (Squiggle Labs, Shanghai) where children aged 8–14 built robots with emotional personalities. Kids designed creatures that responded to sensor inputs with behavioral states. For example: if the light sensor reads low AND the touch sensor is triggered, the robot feels scared and backs away.

The workshop wasn't about coding - it was about designing systems. And the scaling bottleneck it revealed directly shaped the AI pipeline architecture I build today.

What Made It Work

Structured scaffolding + open-ended creative divergence. The technical progression was logically sequenced: outputs first, then inputs and conditionals, then expanded outputs (motors/servos), then full integration. But within that structure, every child built something unique: their own robot personality, their own emotion logic, their own physical form.

Kids learned to think in terms of Input → State → Output, to iterate toward a vision, to debug by isolating variables, and to turn emotional ideas into computational logic.
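The Input → State → Output framing can be sketched in a few lines. This is a minimal illustration, not the workshop's actual code or hardware platform: Python is used only for readability, and the names `SensorReading`, `Emotion`, `next_emotion`, `behavior_for`, and the threshold value are all assumptions made for the example.

```python
from dataclasses import dataclass
from enum import Enum


class Emotion(Enum):
    CALM = "calm"
    SCARED = "scared"


@dataclass
class SensorReading:
    light: int     # hypothetical analog value, lower means darker
    touched: bool  # True when the touch sensor is triggered


LOW_LIGHT_THRESHOLD = 200  # assumed cutoff; a real robot would need calibration


def next_emotion(reading: SensorReading) -> Emotion:
    """Input -> State: low light AND touch means scared, otherwise calm."""
    if reading.light < LOW_LIGHT_THRESHOLD and reading.touched:
        return Emotion.SCARED
    return Emotion.CALM


def behavior_for(emotion: Emotion) -> str:
    """State -> Output: map each emotion to a (hypothetical) action name."""
    actions = {Emotion.SCARED: "back_away", Emotion.CALM: "idle"}
    return actions[emotion]


if __name__ == "__main__":
    reading = SensorReading(light=120, touched=True)
    emotion = next_emotion(reading)
    print(emotion.value, "->", behavior_for(emotion))
```

Keeping the state as its own step, rather than wiring sensors straight to motors, is what lets a child debug by isolating variables: they can check whether the robot reached the wrong emotion or chose the wrong action for the right one.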

The pedagogy was project-based learning (PBL) with deliberate scaffolding at every stage. Stop-motion storytelling and collaborative world-building provided narrative context. The studio-style environment emphasized high autonomy, social energy, low frustration, and iterative creation.

Observed outcomes:

Strong engagement - kids stayed late voluntarily

Low frustration spikes

High ownership of their projects

Iterative MVP-first mindset emerged naturally

Successful management of increasing complexity

The Scaling Problem

The bottleneck was never the curriculum or the kids - it was facilitator cognitive overload.

Running the workshop required a facilitator to simultaneously:

Model multiple evolving child-built systems in parallel

Context-switch constantly between different children's unique projects

Track each child's logic system and debug uniquely structured code

Adaptively calibrate challenge to stay within each child's Zone of Proximal Development (ZPD)

This is the same bottleneck that limits any high-quality hands-on learning experience: the thing that makes it good (adaptive, personalized scaffolding) is also the thing that makes it expensive and hard to scale.

Eight Scaffolding Meta-Protocols

I documented the facilitator's intuitive heuristics as eight structured meta-protocols - the "rules" a great facilitator follows without thinking about it:

1. Complexity Gating - Gradually unlock technical tools and logic layers. Prevent cognitive overload while maintaining stretch.

2. MVP Slicing - Reduce ambitious ideas into the smallest working version. Keep work inside the learner's ZPD.

3. State-Based System Modeling - Frame behavior as Input → State → Output. Build causal reasoning and system design thinking.

4. Simplification Before Addition - When instability occurs, reduce complexity before layering fixes.

5. Externalized Thinking - Move logic out of working memory and into visible form (rubber duck debugging, state diagrams, expected vs. actual comparison).

6. Ownership Preservation - The facilitator guides but does not take control: asking before telling, prompting verbally, and celebrating micro-wins.

7. Motivational Anchoring - Use the child's emotional engagement with the project to steer direction without collapsing scope.

8. Iterative Expansion - Success unlocks complexity. Gradual feature addition, version progression.
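As a rough sketch of how a few of these protocols might be written down as explicit rules, the example below combines Complexity Gating, Simplification Before Addition, and Iterative Expansion. The tier order follows the workshop's technical progression; the tier names, the `LearnerProgress` class, and its methods are hypothetical and exist only for this illustration.

```python
from dataclasses import dataclass

# Tier order mirrors the workshop progression; the names are illustrative.
TIERS = [
    "outputs",
    "inputs_and_conditionals",
    "motors_and_servos",
    "full_integration",
]


@dataclass
class LearnerProgress:
    unlocked: int = 1  # number of tiers currently available to the child

    def available_tools(self) -> list[str]:
        """Complexity Gating: only unlocked tiers are offered."""
        return TIERS[: self.unlocked]

    def record_working_version(self) -> None:
        """Iterative Expansion: a working version unlocks the next tier."""
        self.unlocked = min(self.unlocked + 1, len(TIERS))

    def simplify(self) -> list[str]:
        """Simplification Before Addition: suggest debugging with one tier
        fewer, without revoking anything already unlocked."""
        return TIERS[: max(self.unlocked - 1, 1)]


if __name__ == "__main__":
    progress = LearnerProgress()
    progress.record_working_version()
    print(progress.available_tools())
    print(progress.simplify())
```

A real facilitator applies these rules with far more nuance than a counter can capture; the point of the sketch is only that the heuristics are concrete enough to be stated as inspectable logic.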

The AI Opportunity

An AI facilitator co-pilot could operationalize these protocols: tracking each child's project state, generating contextual scaffolding prompts, suggesting simplification strategies when a child is stuck, and helping less-experienced facilitators deliver the same quality of adaptive support that currently requires deep expertise.

The AI is backstage - the adult-child interaction remains central.

This is a direct parallel to the content pipeline problem: how do you encode expert judgment into AI-mediated systems so quality scales without degrading? In Squiggle Story, the expert judgment lives in grammar contracts and design decisions. In the workshop context, it lives in the scaffolding protocols. The pattern is the same.

The durable skill this workshop taught was never syntax or coding. It was:

Systems thinking - designing behavior through structured logic

Debugging mindset - isolating variables, testing hypotheses

Iterative constraint design - working within limits to create something new

Creative synthesis - bridging physical and digital, emotional and computational

AI may generate code. But structured system understanding - the ability to design, debug, and reason about complex interacting systems - remains scarce. The workshop was about building that capacity in children. The AI pipeline work is about encoding that capacity into production systems.

Strategic Reframing

Facilitator Co-Pilot MVP Direction

Phase B (near-term): Facilitator-facing tool. AI generates structured scaffolding prompts, suggests simplification strategies, helps isolate state logic, and reduces facilitator cognitive load.

The facilitator enters a child's goal → the system suggests an MVP slice → the facilitator receives scaffold questions → the child tests → the system recommends the next expansion.
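The loop above can be modeled as a small state machine. This is a sketch under stated assumptions, not a description of an existing tool: the `Step` names and `next_step` function are hypothetical, and two transitions are my own assumptions rather than part of the described flow (a failed test returns to a smaller MVP slice, and a recommended expansion starts the next slice).

```python
from enum import Enum, auto


class Step(Enum):
    ENTER_GOAL = auto()
    SUGGEST_MVP_SLICE = auto()
    SCAFFOLD_QUESTIONS = auto()
    CHILD_TESTS = auto()
    RECOMMEND_EXPANSION = auto()


def next_step(step: Step, test_passed: bool = True) -> Step:
    """Advance one step through the facilitator co-pilot loop."""
    if step is Step.CHILD_TESTS:
        # Assumption: a failed test goes back to slicing (simplify first).
        if test_passed:
            return Step.RECOMMEND_EXPANSION
        return Step.SUGGEST_MVP_SLICE
    transitions = {
        Step.ENTER_GOAL: Step.SUGGEST_MVP_SLICE,
        Step.SUGGEST_MVP_SLICE: Step.SCAFFOLD_QUESTIONS,
        Step.SCAFFOLD_QUESTIONS: Step.CHILD_TESTS,
        # Assumption: each expansion becomes the next slice to build.
        Step.RECOMMEND_EXPANSION: Step.SUGGEST_MVP_SLICE,
    }
    return transitions[step]


if __name__ == "__main__":
    step = Step.ENTER_GOAL
    for _ in range(4):
        step = next_step(step)
        print(step.name)
```

In this framing the AI would only produce the content of the slice, question, and expansion steps; the facilitator still decides what to say to the child at each transition.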

Phase C (longer-term): AI-augmented creative platform. AI-assisted logic construction with visible, inspectable state models. Agent orchestration playgrounds. Multi-agent behavior modeling. Not "learn to code" - learn to design intelligent systems.